MCP is how agents learn your company's taste

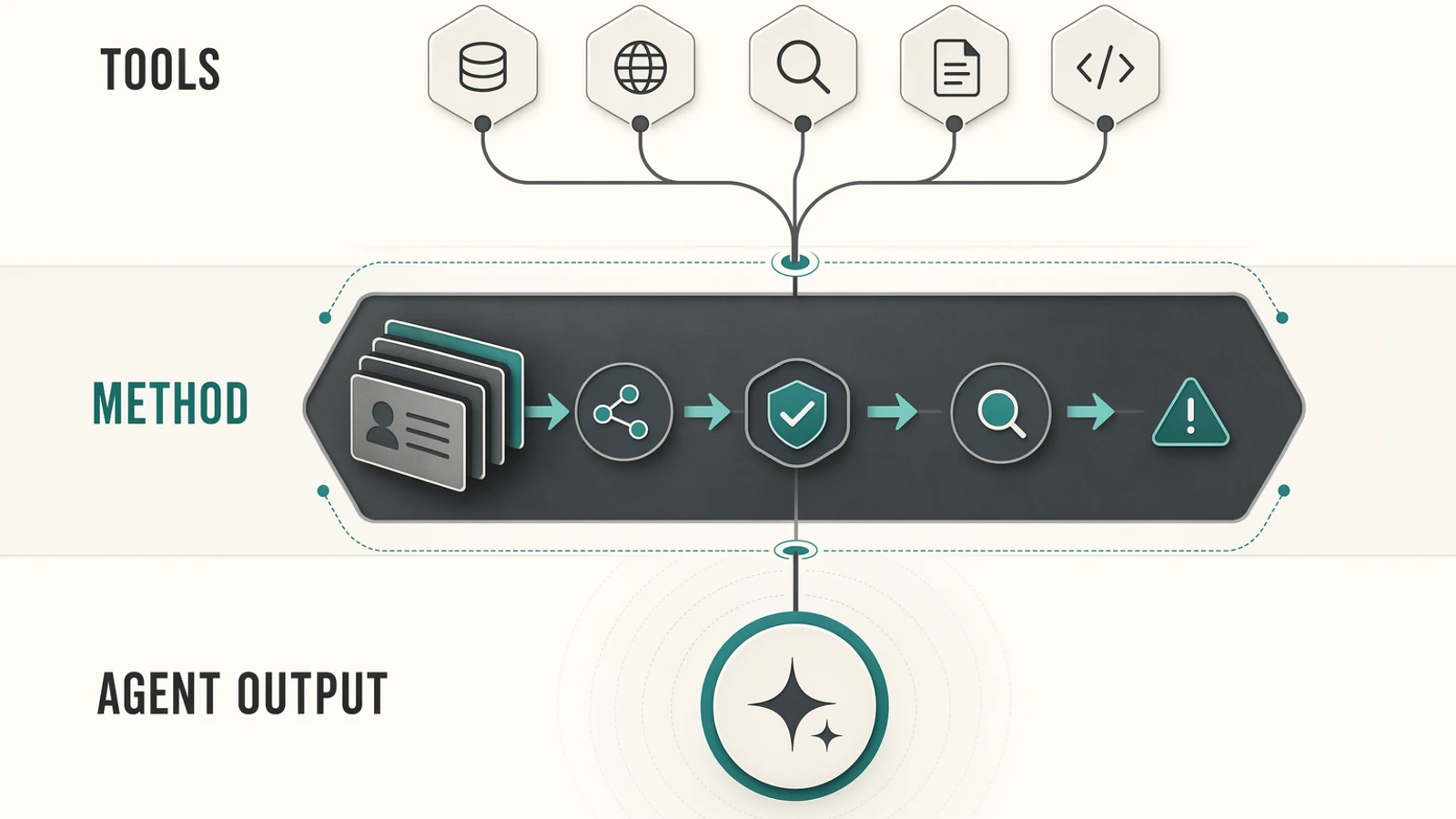

Tool access is the shallow promise of MCP. The real value is packaging company judgment - context, order, permissions, review, and taste - into a surface agents can actually use.

Most MCP demos start with the same move: expose a list of tools.

Search docs. Create ticket. Read customer. Update CRM. Run build. Send message. Open pull request. It feels good because it is concrete. The agent is no longer trapped in a chat box. It can touch the company.

Then the first real workflows arrive, and the difference becomes obvious. The problem was never only whether the model could call the system. The problem was whether it understood how the company expects the work to be done.

We hit this while building Granite. The first MCP server was technically correct: create notes, search notes, update notes. Same vault, same files, same markdown. But agents treated it like a database and left behind loose fragments.

The better version taught a method. Wake up the vault first. Read before writing. Preserve provenance. Link related notes. Compile scattered knowledge instead of dumping another file. The tools did not change much. The behavior did.

That is the part of MCP people underestimate. A good MCP server is not a toolbox. It is how agents learn your company's taste.

tool access is the easy layer

MCP matters because it standardizes how agent clients connect to external systems. A server can expose tools, resources, and prompts through a common protocol instead of forcing every product to build every integration from scratch.

That layer is necessary. It removes glue code. It makes the ecosystem composable. It lets an agent read a repo, query a CRM, inspect a document store, or trigger a workflow without a custom one-off bridge each time.

But raw access is ambiguous. An API knows what can happen. It does not know what should happen.

A ticketing API knows a ticket can be closed. It does not know that this account is strategic, that the same customer complained twice this month, or that the issue is connected to an incident product is still investigating. A deployment API knows a build can go to production. It does not know that Friday afternoon deploys are unusual unless the fix is urgent, reviewed, and reversible.

This is why tool-connected agents often look better in demos than in production. They can reach the system, but they still have to infer the invisible rules. Every company has those rules. Some are written in docs. Most live in habits, names, review rituals, Linear comments, Slack threads, and the memory of people who have been around long enough to know when something feels wrong.

taste is operational, not decorative

"Taste" can sound like a soft word. In agent systems, it is hard infrastructure.

Taste is what tells an agent to gather context before acting. It is the difference between a draft and a send. It is the habit of citing the source of a claim. It is the rule that destructive actions need confirmation, that weak evidence should be marked as weak, and that a risky customer conversation should be escalated instead of polished into a confident answer.

The best human operator in a company is not only the person who knows which buttons exist. It is the person who knows the order, the exceptions, the review points, and the standard of quality. MCP can carry part of that.

Not by making the server a giant brain. The runtime should stay boring. Tools should stay narrow. Permissions should stay explicit. The interesting work happens in the surface around the tools: names, prompts, resources, defaults, response shapes, approval gates, and the sequence of what the agent sees first.

The surface is where the company says: this is how we work here.

one support example is enough

Take customer support.

The weak MCP server exposes four actions: search_tickets, read_customer, draft_reply, issue_refund. That is useful, but it gives the model too much room to improvise. It can answer before understanding the customer. It can propose a refund without seeing the policy. It can close a ticket while missing a churn signal.

The stronger server may call the same underlying APIs, but it exposes a better working path. Before drafting, it loads customer tier, recent tickets, open incidents, internal notes, and refund policy. It separates "draft a reply" from "send a reply". It can propose a refund, but not execute one without approval. It returns the facts used in the answer and flags when the evidence is thin.

The model feels smarter, but the real improvement came from the environment. The server stopped asking the model to guess the company's support discipline from a tool list.

This is also a safety point. MCP sits exactly where text becomes action. The official security guidance focuses on consent, trust boundaries, permissions, and tool safety for a reason. A good MCP server makes risk visible at the moment where an agent is about to do something that matters.

the Granite lesson generalizes

Granite made the problem easy to see because the domain is simple: markdown files, links, search, and a local index. There is no magic model inside Granite. No embedding pipeline. No agent loop. The MCP server is the interface between a general agent and a structured company memory.

The first version exposed storage. The useful version exposed a way of working with knowledge.

That distinction shows up everywhere. A deployment MCP should teach release discipline. A CRM MCP should teach pipeline hygiene. A finance MCP should teach evidence, approvals, and traceability. A knowledge MCP should teach when to read, when to write, when to link, and when to say "this claim is not supported yet."

We saw the same pattern again when we used agents to compile a CIR/CII evidence dossier. The output was useful because the agent did not start by writing. It started by reconstructing the proof chain from Linear, GitHub, docs, and source material. The method mattered more than the model.

That is the shift: MCP should not only expose the company's systems. It should expose the company's operating judgment.

design the server around the next good move

The wrong first question is: "Which endpoints can we expose?"

The better question is: "What would a strong teammate do before touching this system?"

For every workflow, there is usually a short answer. Load this context first. Never perform this action silently. Ask for approval here. Return uncertainty like this. Preserve these source links. Create this artifact at the end. Escalate this class of issue instead of pretending to solve it.

Those decisions are product design, not prompt decoration. They decide whether the agent behaves like a random intern with admin access or like someone operating inside the company's actual process.

The best MCP servers will probably feel a little opinionated. They will make the safe path obvious. They will name dangerous actions in a way that makes danger visible. They will offer workflow tools that bundle the right reads before the write. They will return structured evidence instead of vibes.

This is where the next layer of differentiation lives.

the protocol will become boring. the method will not.

Good protocols become invisible. That is the point. Nobody gets excited about HTTP every morning, but the products built on top of it are not the same. SQL did not make every database product identical. Git did not make every engineering team work the same way.

MCP will likely follow the same path. Connectivity will become expected. The surprising part will not be that an agent can call your tools. The surprising part will be whether it can operate with your standards.

Every company is already full of latent method: how deals move, how incidents are handled, how documents get trusted, how customer risk is detected, how launches are reviewed, how knowledge becomes a deliverable. MCP is one of the cleanest places to turn that method into an interface.

The tool list gets you the demo.

The method gets you work you can trust.

Further reading

- Model Context Protocol specification

- MCP security best practices

- Anthropic introducing MCP

- OpenAI MCP documentation

- Granite on GitHub

- Why I replaced prompts with skills

- Agents don't lack tools. They lack company memory.

- Le vrai sujet avec les agents IA, c'est la formation

- My personal OS lives in a folder. My agents run my company from it.

- Playbook: preparing a CIR/CII dossier with Claude Code